Trending

Stories

Tips

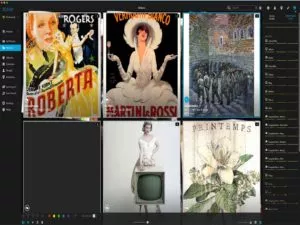

In a world of digital clutter, Mylio Photos makes it easy to organize and enjoy the photos of a lifetime.
